Research - TEDS-Validate

Abstract

The TEDS-Validate project aims to answer whether findings obtained with instruments that measure the professional competence of mathematics teachers have predictive validity for the quality of their instruction and for their students' learning progress. TEDS-Validate builds strongly on previous work on the quality-controlled development of such instruments, carried out in TEDS-M, TEDS-FU, and TEDS-Instruction, and addresses the final aspect of their validation: predictive validity. It is a joint project of the University of Hamburg, the University of Cologne, and CEMO (Centre for Educational Measurement at the University of Oslo). It will be conducted in cooperation with the Thuringia Institute for Teacher Professional Development, Curriculum Development and Media (Thüringer Institut für Lehrerfortbildung, Lehrplanentwicklung und Medien; Thillm) and is supported by the project "Kompetenztest.de" of Friedrich Schiller University Jena. TEDS-Validate is conducted in Thuringia and, in addition, in Saxony and Hesse.

Aims

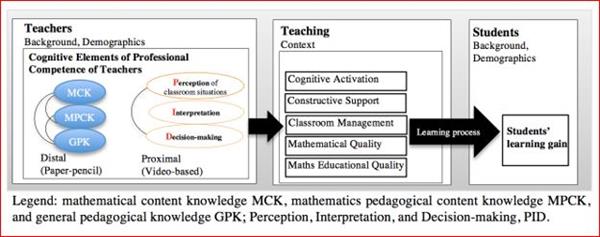

The project TEDS-Validate aims to answer whether findings obtained with instruments that measure the professional competence of mathematics teachers have predictive validity for the quality of their instruction and for their students' learning progress. The instruments to be used were developed in the comparative “Teacher Education and Development Study: Learning to Teach Mathematics” (TEDS-M) (Blömeke, Kaiser & Lehmann, 2010a, b) and measure the professional competencies of future mathematics teachers. If the predictive validity of teachers' competencies for high-quality instruction and student achievement can be confirmed, such assessments will meet the criteria of objectivity, reliability, and validity and will enable the examination of teacher effectiveness in future higher-education research. The TEDS-M measurement instruments have already undergone validation. They capture the central cognitive elements of mathematics teachers' professional competence (Shulman, 1987; Baumert & Kunter, 2006): mathematical content knowledge (MCK), mathematical pedagogical content knowledge (MPCK), and general pedagogical knowledge (GPK). However, it remains an open question whether predictive validity can be assumed with regard to the mastery of professional tasks relevant to teaching, the core task of teachers.

Another objective of the project is to determine which forms of teacher knowledge, declarative ("knowing that…") and procedural ("knowing how…"), are acquired during teacher preparation, where they are acquired, and whether they matter for teaching. The TEDS-M measurement instruments measure declarative knowledge. Such knowledge is acquired during teacher preparation and can be considered part of the knowledge base teachers need for teaching. Procedural knowledge, by contrast, depends on practical experience and is tied to situations and performance in class. For this reason, the TEDS-M follow-up study, TEDS-FU, developed innovative situation-specific measures of teacher competence ("video-cued testing"). Expert reviews have shown these instruments to be reliable, valid, and suitable for capturing situation-specific skills. If they also turn out to have predictive validity, in-depth analyses can be carried out on how declarative and procedural knowledge are acquired during teacher preparation, how they relate to each other, and how their predictive power differs. Such analyses would provide evidence for designing university teacher education, especially regarding the relationship between theory and practice in opportunities to learn. Although this evidence will relate to mathematics teacher preparation, it has high potential for generalization.

Theoretical Framework

The international state of research on the development of professional competence during teacher preparation has substantial gaps. Although all studies assume a chain of effects from teacher preparation to teacher competence to instructional quality to student progress, there is no empirical evidence on whether the competencies teachers acquired during their preparation influence the quality of their instruction and the learning progress of their students. Moreover, the effects of the three knowledge categories (content knowledge, pedagogical content knowledge, and general pedagogical knowledge) on instructional quality and student learning have not yet been modelled simultaneously, nor have situation-specific cognitive skills been taken into account.

Specifically, TEDS-Validate will investigate the following research questions:

(1) Can we provide empirical evidence that the TEDS-M and TEDS-FU measurement instruments have predictive validity for high-quality mathematics teaching? We expect that MCK, MPCK, and GPK as well as the video-based measures significantly affect instructional quality and correlate with students' learning gains.

(2) Do situation-specific skills (as measured via video-based assessments) help explain instructional quality and students' learning gains beyond the effects of teachers' professional knowledge (as measured via paper-and-pencil tests), i.e., do they have added value? We expect the video-based skills of perception, interpretation, and decision-making to correlate more strongly with instructional quality and students' learning gains than the knowledge-based tests (MCK, MPCK, GPK). Moreover, we expect the relationship between the video-based measures and learning gains to be mediated by instructional quality.
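Hypothesis (2) describes a mediation structure: video-based skills affect learning gains partly through instructional quality. As a minimal sketch, assuming observed (not latent) scores and ordinary least squares, the product-of-coefficients approach splits the total effect of a skill score on learning gain into a direct part and an indirect part running through instructional quality. The function names and toy data are hypothetical; the project itself plans structural equation modelling on latent variables, which this simplification does not reproduce.

```python
def _center(values):
    """Subtract the mean so the regressions need no intercept term."""
    mean = sum(values) / len(values)
    return [v - mean for v in values]


def _dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def simple_slope(x, y):
    """OLS slope of y regressed on a single predictor x."""
    xc, yc = _center(x), _center(y)
    return _dot(xc, yc) / _dot(xc, xc)


def two_predictor_slopes(x1, x2, y):
    """OLS slopes of y on x1 and x2, solving the 2x2 normal equations (Cramer's rule)."""
    x1c, x2c, yc = _center(x1), _center(x2), _center(y)
    s11, s12, s22 = _dot(x1c, x1c), _dot(x1c, x2c), _dot(x2c, x2c)
    s1y, s2y = _dot(x1c, yc), _dot(x2c, yc)
    det = s11 * s22 - s12 * s12
    if det == 0:
        raise ValueError("predictors are perfectly collinear")
    return (s22 * s1y - s12 * s2y) / det, (s11 * s2y - s12 * s1y) / det


def mediation_effects(skill, quality, gain):
    """Hypothetical product-of-coefficients mediation: skill -> quality -> gain."""
    a = simple_slope(skill, quality)  # path a: skill predicts instructional quality
    direct, b = two_predictor_slopes(skill, quality, gain)  # path c' and path b
    total = simple_slope(skill, gain)  # path c: total effect of skill on gain
    return {"a": a, "b": b, "direct": direct, "indirect": a * b, "total": total}
```

With OLS fitted to the same data, the total effect equals the direct effect plus the indirect effect a·b; an actual analysis would also need an interval estimate for the indirect effect, for example by bootstrapping.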

Study Design

TEDS-Validate will be conducted in Thuringia in 2016 with about 150 participating teachers. The cognitive and situation-specific tests will take about three hours of testing time in total and will be administered online. In addition, the instructional quality of a subgroup of teachers (n = 25) will be captured through classroom observations over four lessons, using a recently developed rating instrument. Students' learning progress will be measured via the central assessments (Lernstandserhebungen) that regularly take place in grades 6 and 8 in Thuringia. These assessments are aligned with the national educational standards for mathematics.

Data will be provided through the project “Kompetenztest.de” of the University of Jena. After data collection and cleaning, descriptive statistics will be computed. Test data will be scored using item response theory (IRT), and instructional quality scales will be computed following the procedure applied in other studies. The hypotheses will be examined using covariance and factor analyses as well as structural equation modelling at the level of latent variables.
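The text states only that test data will be scored with IRT, not which model is used. As a minimal sketch, assuming the one-parameter (Rasch) model with item difficulties already calibrated, a teacher's ability θ can be estimated from dichotomous item scores by maximum likelihood using Newton-Raphson. The function names and item values are hypothetical illustrations, not the project's scoring procedure.

```python
import math


def rasch_probability(theta, difficulty):
    """Probability of a correct response under the Rasch (1PL) model."""
    return 1.0 / (1.0 + math.exp(-(theta - difficulty)))


def estimate_ability(responses, difficulties, tol=1e-8, max_iter=100):
    """Hypothetical ML ability estimate via Newton-Raphson.

    responses: list of 0/1 item scores; difficulties: assumed-known item difficulties.
    """
    if len(responses) != len(difficulties):
        raise ValueError("responses and difficulties must have equal length")
    score = sum(responses)
    # The ML estimate diverges to +/- infinity for all-correct or all-wrong patterns.
    if score == 0 or score == len(responses):
        raise ValueError("ML estimate is infinite for extreme response patterns")
    theta = 0.0
    for _ in range(max_iter):
        probs = [rasch_probability(theta, b) for b in difficulties]
        gradient = score - sum(probs)  # derivative of the log-likelihood
        information = sum(p * (1.0 - p) for p in probs)  # test information at theta
        step = gradient / information
        theta += step
        if abs(step) < tol:
            break
    return theta
```

At the estimate, the expected score equals the observed raw score. For instance, with responses [1, 0] to items of difficulty -1 and 1, the estimate is approximately 0. Operational scoring would typically also estimate item parameters and may prefer Bayesian estimators that handle extreme patterns.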

Access to the field will be supported through the cooperation with the Thuringia Institute for Teacher Professional Development, Curriculum Development and Media (Thillm). Data will be collected online with the software package “UniPark”. Teachers will receive incentives, e.g., professional development workshops. After the first project findings have been published, the project's instruments and data will be made available to the scientific community in accordance with APA standards.

Project leaders Prof. Dr. Gabriele Kaiser (head of research group), Universität Hamburg

Prof. Dr. Johannes König, Universität zu Köln

Prof. Dr. Sigrid Blömeke, University of Oslo (Norway)

Project members Anne Hardt, Universität Hamburg

Dr. Hannah Heinrichs, Universität Hamburg

Caroline Nehls, Universität zu Köln

Natalie Ross, Universität Hamburg

Ute Suhl, Universität zu Köln

Homepage https://www.teds-validierung.uni-hamburg.de/

Time frame 01.02.2016 – 31.08.2019